by sid drmay

My initial project idea for Dinacon this year was based on wanting to play with ideas of a monster that is controlled by a brain fungus. I thought this would exist as a game of sorts, or involve working with Dinasaurs to build different visual experiences of how the monster would look. However, due to some constraints, I ended up being the solo subject.

I was inspired by Madeline Schwartzman's Face Nature projects. Seeing all the ways that she uses plants to change her face and express herself has been one of my favourite things.

Batticaloa is full of the most incredible plants, growing all over in a beautiful chaos. The way we were surrounded by unkempt growth around Batticaloa and maintained growth at Riviera was inspiring.

Wandering through the area, I found these bright pink petals that had a soft, paper-lantern-like texture. I knew immediately that I wanted to use them. They maintain their colour so wonderfully as they die, becoming lighter pink but still more vibrant than I’ve seen in other flowers. Their paper-like qualities become more obvious as they dry out, instead of rotting like other flowers do.

I also utilized turkey tail mushrooms that I found, nearly petrified, on branches used as fencing outside some of the homes. I searched for branching pieces of coral on the beach to add further texture and ambiguous origins to the monster. I gathered all the plants, flowers, leaves and coral that I liked the aesthetics of and proceeded to design a headpiece that made up the majority of the costume. After that I experimented with a couple of iterations of how the monster could look, and found that I really enjoyed using petals to make a scale-like situation down the face that grew with each version.

Making a monster is many things, but especially knowing that monsters are not always monstrous. Monsters can be beautiful.

This was something I wanted to utilize: I wanted to show all the beautiful plants and colours that can be found in Batticaloa. I created tiers of leaves and flowers combined to look like a kind of rippling outer skin, with vines and tendrils swooping down around the face and body.

My monster is dripping with layers, flowing down the face, onto the neck and down the body. The leaves, petals and mushrooms work together to create a beautiful, monstrous being that is being taken over by the plants around them.

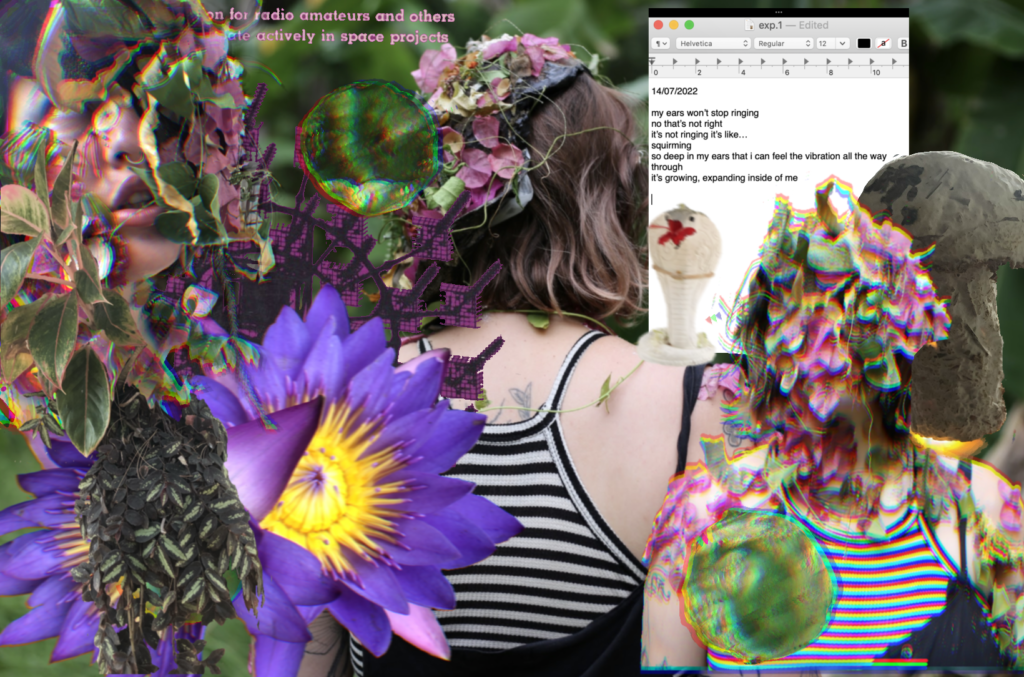

Once I had assembled the monster’s look, I had photos taken by Luci Dayhew (thanks Luci!!) that I was able to use as the base of the collages. After that I spent time combing through my photos from Batticaloa, pulled elements that spoke to me, and arranged them with the monster pictures. The final digital collages can be read as three panels of a story showing the progression of the fungus monster taking over the human brain.